You are looking for products in the category of “Rcf Art 735”.

Recommended products regarding the topic “Rcf Art 735”

We have compared products in the category “Rcf Art 735”. Here you can find the top 16.

Rcf Art 735 – the most important at a glance

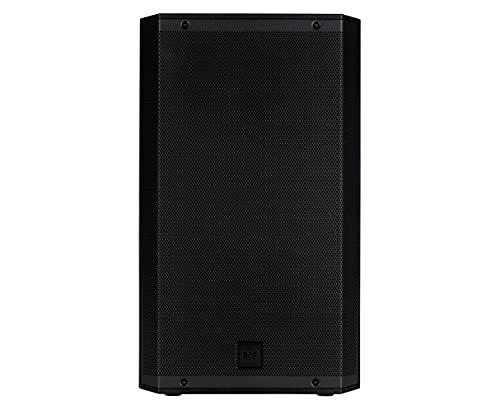

The RCF ART 735 is a high-output, two-way active loudspeaker with a 12-inch woofer and a 1-inch compression driver. It is well suited to nightclubs, bars, restaurants, and other live music venues, and it can also be used for DJing and other mobile audio applications.

The ART 735 is powered by a 350-watt Class D amplifier designed to deliver high power with low distortion and high efficiency. The loudspeaker has a frequency response of 45 Hz to 20 kHz. The 12-inch woofer reaches a peak output of 129 dB SPL, and the 1-inch compression driver reaches a peak output of 131 dB SPL.

The ART 735 has a built-in two-channel mixer that lets you mix two audio sources independently. Each channel has its own level control and three-band EQ, and a master volume control sets the overall output. The loudspeaker provides two balanced XLR inputs and two unbalanced 1/4-inch inputs. Use the balanced XLR inputs to connect a mixer or other audio device, and the unbalanced 1/4-inch inputs to connect a portable music player or other audio source. A balanced XLR output lets you link the loudspeaker to another active loudspeaker or send the signal to a recording device.

The ART 735 has a robust, impact-resistant enclosure made of tough molded plastic, and the loudspeaker is protected by a powder-coated steel grille. It mounts on a standard 35 mm pole and can also be installed on a wall or ceiling using the optional RCF WMB wall-mount bracket or the optional RCF CMB ceiling-mount bracket.

Bestsellers in “Rcf Art 735”

Here you can find a list of bestsellers in the category “Rcf Art 735”. It shows which products other users have bought most often.

- Rolling bag designed to fit the Mackie Thump TH-15A and similar 15" loudspeakers

- Dual-zipper design allows easy load-in of the speaker

- Structured foam in the bottom of the bag cradles the speaker

- Rugged pull-out handle and wheels

- Rugged nylon construction with 10 mm side foam

- Interior dimensions: 28.5 x 18.5 x 16 inches; exterior dimensions: 30.5 x 20.5 x 18.5 inches

Our winner: the rolling bag listed above, designed to fit the Mackie Thump TH-15A and similar 15" loudspeakers.

Current offers for “Rcf Art 735”

Looking to buy the best products in “Rcf Art 735”? This list is updated with new offers every day and covers a large selection of current products in the category “Rcf Art 735”.

No products found.